Jeff Bezos has said, “To invent you have to experiment and if you know in advance that it’s going to work, it’s not an experiment.” By that definition, Amazon Scout is an enormous experiment.

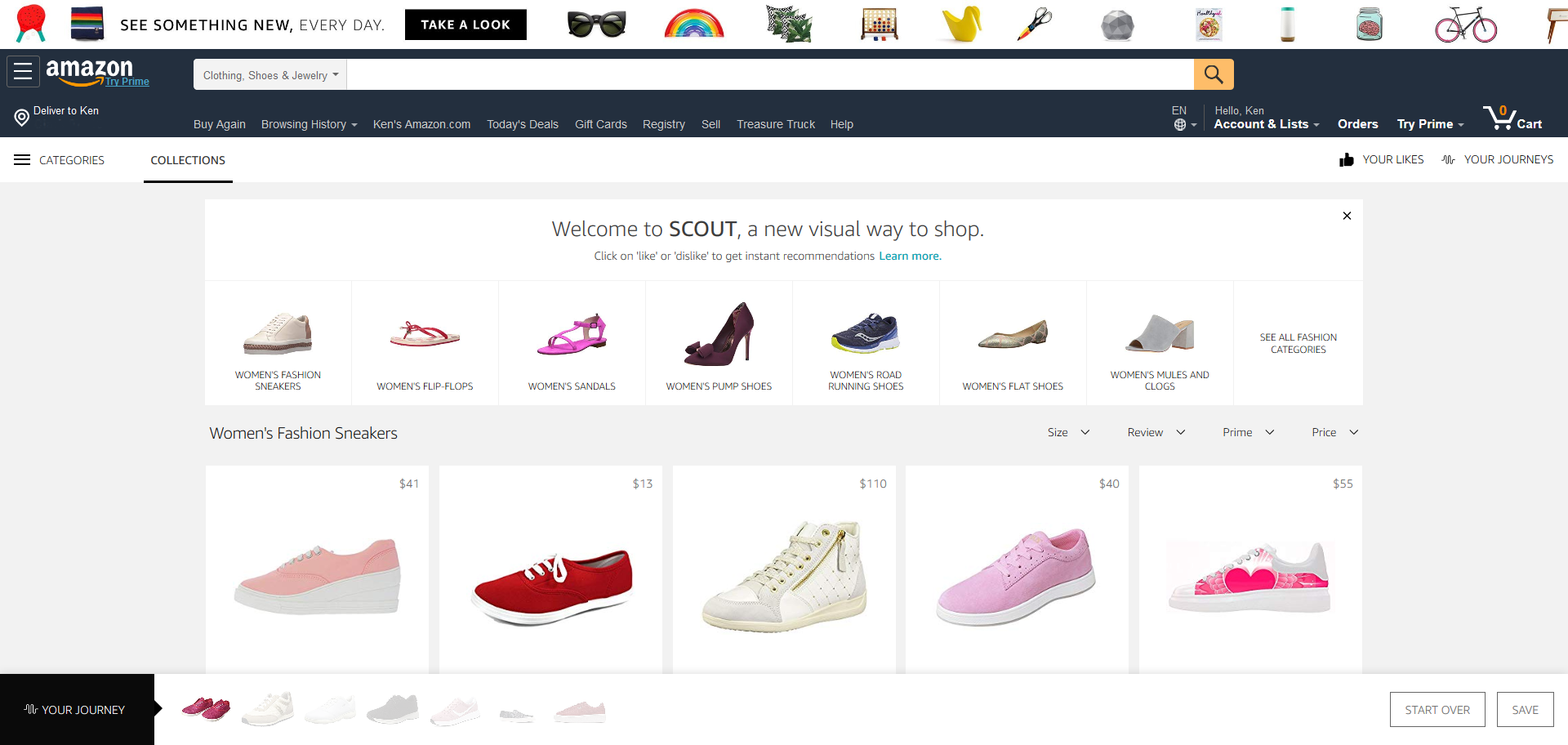

Scout is a new visual search engine of sorts (within Amazon). It’s aimed at categories where visual appeal is a big part of shoppers’ selection criteria, so furniture, women’s shoes, and lighting are early subjects. Shoppers choose a product subcategory and then give a thumbs up or down to each product image with price. It seems like a simple and intuitive method of culling preferred items from a giant list of possibilities, and it is. But without a massive rework, it will be a failed “experiment.”

The system uses machine learning, a form of AI that “learns” from criteria (in this case, a like or dislike), and then makes replacements to the displayed assortment based on similarities or contrasts to the user-ranked item. The more rankings, the more tuned the selection, until, in theory, the product choices displayed are largely purchase candidates that meet the shopper’s desires.
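To make that feedback loop concrete, here is a minimal sketch of how a like/dislike signal could drive replacements. It is not Amazon's actual algorithm, which has not been published; the catalog, the three-number feature vectors, and the function names `cosine`, `score`, and `next_replacement` are all hypothetical and chosen only for illustration. It assumes each product can be described by a few numeric attributes and that candidates are ranked by similarity to liked items minus similarity to disliked ones.

```python
from math import sqrt

# Hypothetical catalog: each product is a made-up feature vector,
# e.g. [modern, rustic, dark_color]. Real product metadata would be far richer.
CATALOG = {
    "lamp_a": [1.0, 0.0, 0.0],
    "lamp_b": [0.9, 0.1, 0.0],
    "lamp_c": [0.0, 1.0, 0.0],
    "lamp_d": [0.1, 0.9, 0.0],
    "lamp_e": [0.0, 0.0, 1.0],
}

def cosine(u, v):
    """Cosine similarity between two vectors (0.0 if either has zero length)."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = sqrt(sum(a * a for a in u)) * sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0

def score(item, likes, dislikes):
    """Similarity to liked items minus similarity to disliked items."""
    vec = CATALOG[item]
    liked = sum(cosine(vec, CATALOG[i]) for i in likes)
    disliked = sum(cosine(vec, CATALOG[i]) for i in dislikes)
    return liked - disliked

def next_replacement(shown, likes, dislikes):
    """Pick the best-scoring product that has not been shown yet, or None."""
    candidates = [i for i in CATALOG if i not in shown]
    if not candidates:
        return None
    return max(candidates, key=lambda i: score(i, likes, dislikes))

# The shopper liked lamp_a and disliked lamp_c; both are already on screen.
print(next_replacement({"lamp_a", "lamp_c"}, ["lamp_a"], ["lamp_c"]))  # lamp_b
```

Note that the sketch only ever sees a thumbs up or a thumbs down. It has no way of knowing whether lamp_c was rejected for its style, its price, or its color, so it simply steers away from everything that resembles it.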

The major sticking point is that humans rarely make absolute judgements when it comes to tasks like shopping, especially when there is an emotional component to the goods being considered. Scout forces the normal expanse of gray-area, multifaceted decision making into one of black or white, which for shoppers may lead to unwanted results, wasted time and effort, disappointment, frustration, and/or lost discovery opportunities.

Scout is further hindered by a cluster of shortcomings, including:

- the system’s inability to ascertain the reason for a like or dislike (price, appearance, color, texture, size, etc.)

- the possibility that the algorithms that select what replacements to make may be biased or outright flawed (unintended bias is a huge concern with algorithm design)

- uncertainty about whether there is sufficient and correct product metadata to effectively support the algorithm’s replacement selection process

- (seemingly) no input from a shopper’s prior purchase history

- no convenient access to supplemental product selection attributes including star ratings and user reviews

- expecting users to shop one way for visually oriented items and another for other items, a UI design fail

- Scout is likely as time consuming as a well-structured traditional filtered search at uncovering candidate products, and probably more so, and it can lead to a dead end

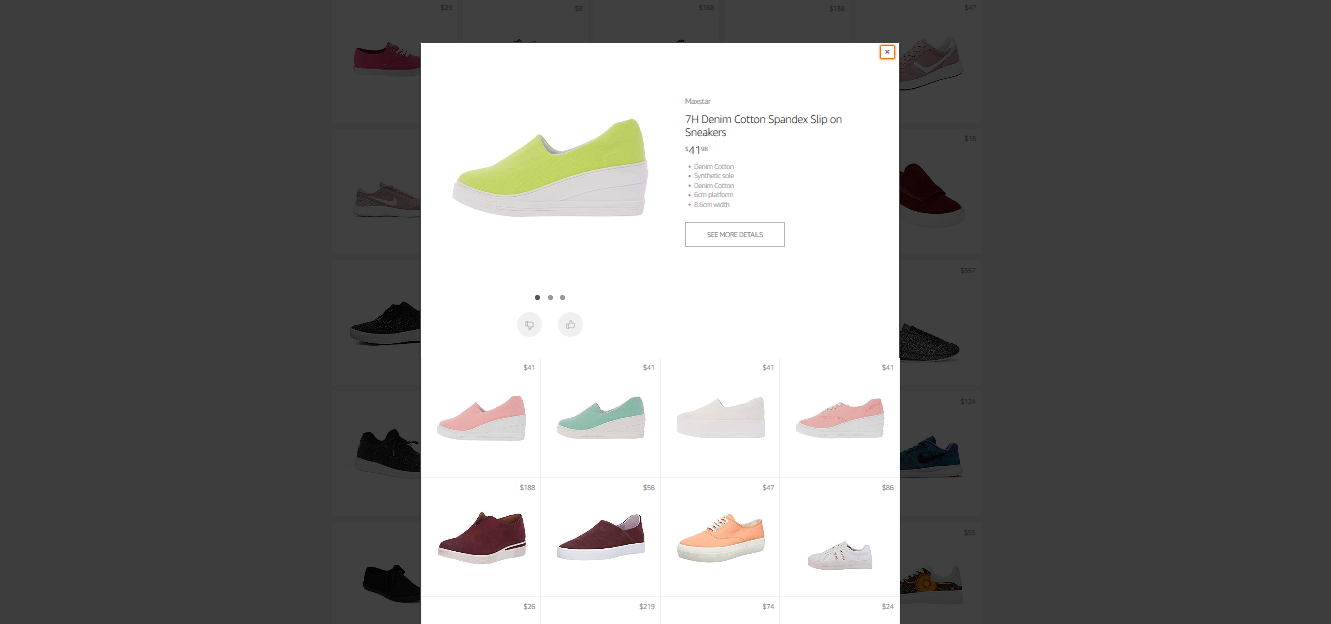

Scout does allow users to see a “Quick View” pop-up window of product attributes and then, within that, either select “See More Details,” which opens the standard product description page in a new browser tab, or rank a sub-selection of related products. Surprisingly, ranking the new products apparently does not change the main Scout results page when a shopper returns there. The rankings do show up in “Your Journey,” a visual history of sorts in the footer, which allows deselected items to be either quick viewed in a new pop-up window or removed from disliked status. Doing so may or may not reset the main Scout results, depending upon where the product view originated.

If all of that sounds fairly complex and cumbersome, it is. Additionally, it makes for many screen touches, a rudimentary UX design fault that smacks the principle of creating user delight upside the head. In fact, going beyond simple likes and dislikes generates an experience that reeks of a half-baked product that forgoes properly considered, tested, and iterated user-centered design.

If, as I believe, Scout turns out to be a washout, not all is lost. In fact, Bezos has said “If you want to be inventive, you have to be willing to fail.” So, using some inventiveness, I think the core concept can morph and add utility.

Some critics of Alexa as a shopping tool point to users’ inability to get a good sense of an unfamiliar product from Alexa’s terse aural descriptions, and they have a point. Alexa does need much work to become a viable shopping assistant. But pairing Alexa with the general premise of Scout on the Echo Show, or any device with Alexa and a screen, can leverage the best of both. Here’s an illustration:

User: “Alexa, show me patio chairs under two hundred dollars.”

Alexa: “Displaying patio chairs under two hundred dollars.”

User: “No. Only lounge chairs.”

Alexa: “Displaying lounge chairs under $200.”

User: “Similar to the third one in the second row, but no yellow chairs.”

Alexa: “Do you like this selection?”

User: “I like the way the second and third look, but am unsure how sturdy they are.”

Etc.

In the example, the visuals add understanding that brief verbal descriptions lack, and the user’s natural spoken language provides an intuitive means to filter search criteria and give Alexa meaningful shopper insights.

And BTW, Amazon just announced Alexa Presentation Language (APL), which “…enables you to build interactive voice experiences that include graphics, images, slideshows, and video, and to customize them for different device types.” That sure makes salvaging the benefit of Scout as an Alexa enhancement look like a very viable outcome.

But until my predicted Alexa-Scout union, expect the usual poorly analyzed press hype around anything Amazon, along with the rarely reported Amazon customer frustration.

Try it for yourself for a real purchase and see how valuable it is to you.