Before there was Alexa, there was ALisA. Well, both were in stealth development simultaneously.

In some ways this project was similar to Alexa, but it was also very different, with a roadmap of HUI (Humanized User Interface) features that are still not available in any voice-activated AI assistant. ALisA was conceived as a subscription SaaS application, designed to run on a laptop, desktop, or mobile device. She was built on a strongly user-centric commitment: usage and data would always remain strictly private and never be monetized. She would maintain state, remembering prior discussions, events, actions, and next steps, and could use that memory to adjust pending tasks.

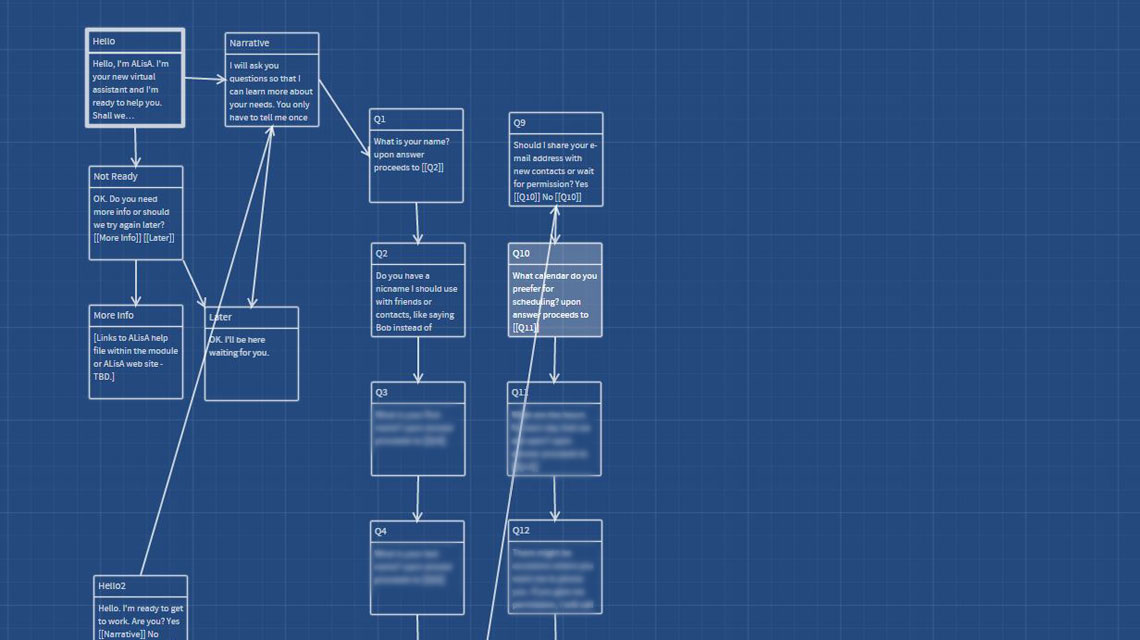

ALisA can be considered my baby, since my primary role was Product Manager. I created the roadmap and most of the elements supporting it, including market analysis, business modeling, concept development, feature ranking, technology research, app functionality design, voice interface design, and dialog design. Along the way I built proprietary algorithmic fault management logic that let ALisA recognize (self-awareness?) the point at which she lacked the knowledge (data) to proceed, and decide how to handle it. (That is the reason for the blurred responses in the dialog diagram.)

Technologies incorporated in my ALisA roadmap:

- VUI [Voice User Interface]

- sentiment analysis [voice and facial]

- gaze tracking

- gesture recognition [hand and body]

- body positioning

- permission-based habit/task tracking/execution

- fault management

- associate recognition/validation

- home automation integration

- more…

The scale of such a project is massive, particularly where AI meets multiple interaction modes. So when seemingly competitive products arrived from major technology companies [not really, I’m just being nice], the decision was made to halt development. Still, years later, those competitors have (so far) fallen short of ALisA’s roadmap, and they absolutely lack its crucial intelligent fault management system and its depth of state and context memory.
